Enterprise AI Adoption & Strategy Consulting

Enterprise AI adoption has a dirty secret: most organisations are not failing at AI because they lack technology or budget. They are failing at strategy, sequencing, and change management.

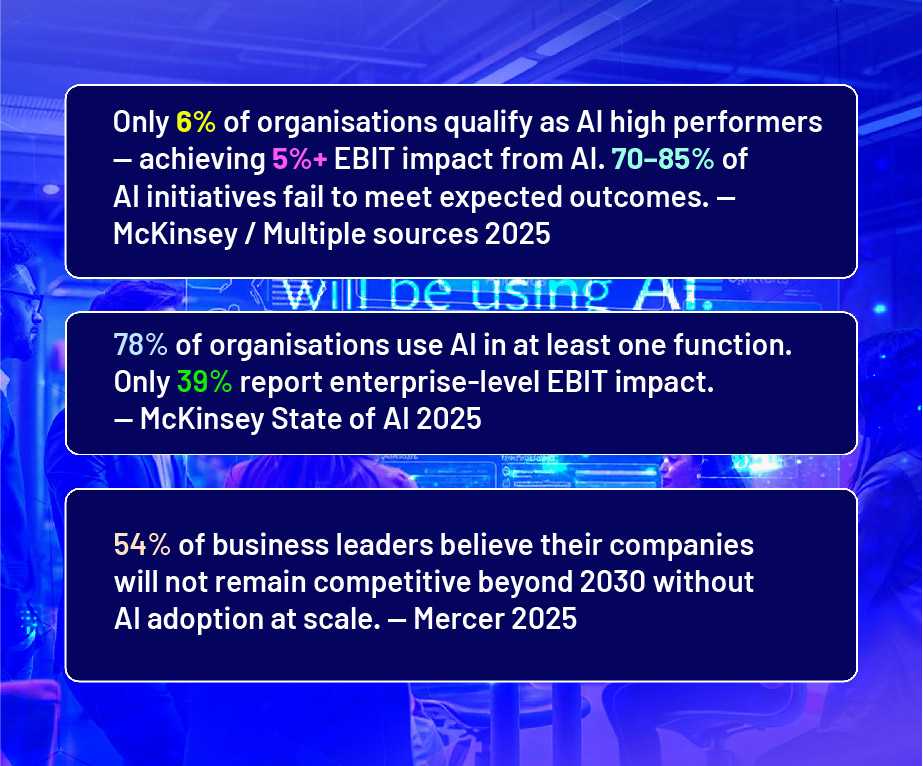

McKinsey's 2025 State of AI report found that while 78% of organisations use AI in at least one business function, only 39% report enterprise-level EBIT impact. The gap between "using AI" and "being transformed by AI" is large — and it is almost entirely a leadership and strategy problem, not a technology problem.

The enterprises that are pulling ahead are not the ones with the most advanced AI tools. They are the ones that have done four things well: identified the right use cases for their specific business context, built governance frameworks that enable responsible deployment, invested in workforce capability alongside technology, and measured outcomes rigorously enough to justify scaling. Amit Jadhav's enterprise AI adoption consulting is built to help leadership teams do exactly these four things — with a practical, hands-on advisory model that combines AI strategy with the implementation expertise to make it real.

Why AI Adoption Fails in Most Enterprises

Understanding the most common failure modes is the fastest way to avoid them. Based on advisory work across enterprises in India and globally, and consistent with the findings of McKinsey, Deloitte, and IBM research, the five most common reasons AI adoption fails are:

- Strategy without specificity: Leadership sets an AI ambition ("be an AI-first organisation") without translating it into specific use cases, business units, or functions with defined targets. This produces paralysis, not progress.

- Technology before readiness: Organisations buy AI platforms or sign large vendor contracts before assessing whether their data, processes, and teams are ready to use them. IBM research found 42% of organisations cannot properly customise AI models due to poor-quality data.

- Pilots that never scale: The average organisation scrapped 46% of AI proof-of-concepts before production in 2025. Most pilots succeed in narrow demos but fail at scale because change management, integration, and governance were not built into the design from the start.

- Skills gap ignored: 46% of leaders identify skill gaps as a significant barrier to AI adoption. Buying technology without upskilling the teams who need to use it produces shelfware, not transformation.

- No measurement framework: Without a clear baseline and defined KPIs before launch, it is impossible to demonstrate ROI — which means budget cycles and leadership support dry up before the initiative has time to prove its value.
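To show why the baseline has to exist before launch, here is a minimal ROI sketch in Python. It assumes the only benefit counted is staff time saved on one process; the function name, inputs and figures are hypothetical, and a real measurement framework would track more KPIs than this.

```python
def pilot_roi(baseline_hours, post_hours, hourly_cost,
              build_cost, monthly_run_cost, months=12):
    """Return simple ROI for one pilot over `months`, as a ratio (1.0 = 100%).

    Hypothetical model: the only benefit counted is staff time saved on one
    process, measured against a baseline captured BEFORE launch.
    """
    # Monthly benefit: hours removed from the process, valued at a loaded hourly cost.
    monthly_saving = (baseline_hours - post_hours) * hourly_cost
    total_benefit = monthly_saving * months
    # Total cost: one-off build plus running cost (licences, maintenance) over the horizon.
    total_cost = build_cost + monthly_run_cost * months
    if total_cost <= 0:
        raise ValueError("total cost must be positive to compute ROI")
    return (total_benefit - total_cost) / total_cost


# Illustrative figures only: 400 h/month baseline, 150 h/month after launch.
roi = pilot_roi(baseline_hours=400, post_hours=150, hourly_cost=30,
                build_cost=20_000, monthly_run_cost=1_000)
print(round(roi, 2))  # → 1.81
```

With these made-up inputs the pilot returns roughly 181% over a year. Without the 400-hour baseline there is nothing to subtract the post-launch figure from, so the ROI cannot be computed at all.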

The Enterprise AI Adoption Framework: Assess, Pilot, Scale

Phase 1

AI Readiness Assessment (Weeks 1–4)

Before any use case is selected or any technology is evaluated, the organisation needs an honest picture of its current AI position. The assessment covers:

- Use case inventory: What AI opportunities exist across functions? Which are highest priority by value and feasibility?

- Data readiness: Is the data required for priority use cases available, clean, and accessible?

- Workforce readiness: What is the current AI skill level across functions? Where are the critical gaps?

- Technology stack: What AI tools and platforms are already in place? What integrations are required?

- Governance readiness: What policies, approval structures, and risk frameworks exist for AI deployment?
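One way to roll these five areas into a simple scorecard is sketched below in Python. It assumes each area is rated on a hypothetical 1–5 scale and that anything below 3 counts as a gap; the dimension keys, the unweighted average and the threshold are illustrative, not a published scoring method.

```python
# The five Phase 1 assessment areas (keys are illustrative names).
DIMENSIONS = ("use_case_inventory", "data", "workforce", "technology_stack", "governance")


def readiness_scorecard(ratings, gap_threshold=3):
    """Summarise 1-5 ratings (hypothetical scale) into an overall score and gap list."""
    for d in DIMENSIONS:
        if d not in ratings:
            raise ValueError(f"missing rating for {d}")
        if not 1 <= ratings[d] <= 5:
            raise ValueError(f"rating for {d} must be between 1 and 5")
    # Unweighted mean across the five areas; a real scorecard may weight them.
    overall = sum(ratings[d] for d in DIMENSIONS) / len(DIMENSIONS)
    # Areas below the threshold, weakest first, become the remediation priorities.
    gaps = sorted((d for d in DIMENSIONS if ratings[d] < gap_threshold),
                  key=lambda d: ratings[d])
    return {"overall": round(overall, 2), "gaps": gaps}


example = {"use_case_inventory": 4, "data": 2, "workforce": 3,
           "technology_stack": 4, "governance": 1}
print(readiness_scorecard(example))  # → {'overall': 2.8, 'gaps': ['governance', 'data']}
```

Listing gaps weakest first keeps the output actionable: an organisation with strong tooling but no governance sees that governance, not another platform purchase, is the first thing to fix.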

Output: An AI Readiness Scorecard and a prioritised use case shortlist. This becomes the foundation for everything that follows.

Phase 2

Pilots and First Wins (Weeks 5–14)

The fastest path to board support and budget commitment is a small number of visible wins, delivered quickly. The pilot phase selects 2–3 use cases from the shortlist — typically a mix of quick wins (high frequency, low complexity) and a strategic priority (higher value, medium complexity).

Each pilot follows the structured deployment blueprint: process map → tool selection → build → parallel test → live deployment → measure. The key discipline here is not to over-engineer: a working 80% solution deployed in 8 weeks beats a perfect solution still in development at week 24.

Phase 3

Scale, Replicate, and Build Internal Capability (Weeks 15–52)

Once pilots have validated the blueprint and delivered measurable ROI, the focus shifts to two parallel workstreams: scaling the proven use cases and building the internal capability to run future AI initiatives without external support.

This is where most consulting engagements end, and where Amit's approach differs. The goal is not to create a dependency on external consultants. It is to leave the organisation with a repeatable process, a trained internal AI champion or team, and a governance framework that enables responsible scaling.

AI Use Cases Across Business Functions

Sales and Revenue:

- AI-assisted prospecting and outreach personalisation (AI agents)

- CRM automation: call summaries, follow-up generation, deal stage tracking

- Proposal and pricing document generation

- Sales coaching: automated call analysis and rep performance feedback

Marketing:

- Content pipeline automation: brief → draft → review → publish → report

- Campaign performance reporting and optimisation recommendations

- Lead scoring, nurture sequencing, and conversion tracking

- SEO and content gap analysis with AI-generated content briefs

Operations and Supply Chain:

- Demand forecasting and inventory optimisation

- Procurement automation and supplier communication

- Quality control: anomaly detection and automated reporting

- Process documentation and SOP generation from operational data

HR and People:

- Recruitment: screening, interview scheduling, candidate summary generation

- Onboarding automation: task sequences, document collection, policy Q&A

- L&D: AI-assisted learning path creation and skills gap identification

- Attrition risk modelling and retention intervention design

Finance:

- Invoice processing and accounts payable automation

- Management reporting: automated data aggregation and narrative generation

- Fraud and anomaly detection in transaction data

- Compliance reporting and audit trail generation

Engagement Models

AI Strategy Sprint (6 weeks):

Rapid assessment and roadmap development. Delivers: AI readiness scorecard, prioritised use case list, 90-day pilot plan, governance framework outline. Ideal for organisations that need executive alignment before committing to a broader programme.

Pilot Programme (12–16 weeks):

Full assessment plus the design and deployment of 2–3 live AI automations or agents. Delivers: working automations in production, ROI measurement framework, team training, pilot learnings documented. Ideal for organisations ready to move from discussion to deployment.

Ongoing Advisory (Monthly retainer):

Ongoing strategic support for organisations mid-transformation. Covers: monthly roadmap reviews, emerging use case evaluation, governance updates, and leadership briefings. Ideal for organisations that have completed a pilot and are scaling.

Masterclass + Consulting Bundle:

For organisations that want both capability building (masterclass for teams) and strategic deployment (consulting engagement). Combined programmes typically show faster results because the workforce upskilling and the use case deployment happen in parallel.

Frequently Asked Questions — Enterprise AI Adoption Consulting

Q. How is Amit Jadhav's AI consulting different from large consultancy firms?

A. Three key differences: speed (advisory programmes start delivering working outputs in weeks, not quarters), business-first focus (every engagement is anchored in specific business outcomes such as revenue, cost, and productivity, not technology architecture), and a no-code approach (solutions are designed to be owned and extended by the client's own team, not dependent on technical specialists or the consulting partner to maintain).

Q. We have already invested in AI tools but aren't seeing results. Can you help?

A. This is one of the most common starting points. Tool investment without strategic sequencing and workforce readiness is the primary reason AI initiatives underperform. The AI Readiness Assessment diagnoses exactly where the gap is (data quality, process design, governance, skills, or change management) and creates a corrective roadmap. Most organisations in this situation are 8–12 weeks from meaningful results with the right advisory support.

Q. How do we know which AI use cases to prioritise?

A. The use case selection framework evaluates three factors: business impact (value of the outcome if the process is automated), feasibility (data readiness, process structure, tool availability), and strategic alignment (does this use case build internal capability for future scaling?). The highest-priority use cases sit at the intersection of high impact and high feasibility; these are the pilots that build momentum and board confidence.
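As an illustration only, the three-factor screen could be sketched in Python as below. The 1–5 ratings, the weights and the "high" threshold of 4 are hypothetical choices; the framework names the factors but not how they are combined. Cases must clear the bar on both impact and feasibility before the weighted score is used to rank them.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int       # 1-5: value of the outcome if the process is automated
    feasibility: int  # 1-5: data readiness, process structure, tool availability
    alignment: int    # 1-5: does it build capability for future scaling?


# Hypothetical weights and threshold; the framework names the factors, not the maths.
WEIGHTS = {"impact": 0.4, "feasibility": 0.4, "alignment": 0.2}
HIGH = 4


def priority_score(uc):
    """Weighted sum of the three factors, used only to rank qualifying cases."""
    return (WEIGHTS["impact"] * uc.impact
            + WEIGHTS["feasibility"] * uc.feasibility
            + WEIGHTS["alignment"] * uc.alignment)


def shortlist(cases, max_pilots=3):
    """Keep cases that are high on BOTH impact and feasibility, best first."""
    qualifying = [uc for uc in cases if uc.impact >= HIGH and uc.feasibility >= HIGH]
    return sorted(qualifying, key=priority_score, reverse=True)[:max_pilots]


cases = [
    UseCase("Invoice processing", impact=4, feasibility=5, alignment=3),
    UseCase("Attrition risk modelling", impact=5, feasibility=2, alignment=4),
    UseCase("CRM call summaries", impact=4, feasibility=4, alignment=4),
    UseCase("Demand forecasting", impact=5, feasibility=3, alignment=5),
]
print([uc.name for uc in shortlist(cases)])  # → ['Invoice processing', 'CRM call summaries']
```

In this made-up example, demand forecasting rates highest on impact and alignment but is filtered out on feasibility: a valuable case without ready data is a later-phase candidate, not a first pilot.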

Q. What does success look like at the end of an engagement?

A. At minimum: 2–3 AI automations or agents deployed in production with documented ROI. A 90-day roadmap for the next phase. An internal AI champion or team trained to manage and extend the deployed solutions. A governance framework covering authorisation, auditability, and risk management. For longer engagements: an enterprise-wide AI skill baseline established, with a structured upskilling programme in place across key functions.

Q. Do you work with specific industries?

A. Amit's consulting work spans professional services, manufacturing, financial services, technology, healthcare, and consumer businesses in India and the USA. The AI adoption framework is industry-agnostic; the use cases and examples are tailored to the client's sector and specific function priorities.

Q. Can the engagement be conducted virtually for our US-based or international team?

A. Yes. All engagement models are available in a fully virtual format. Assessment interviews, workshop sessions, pilot design reviews, and ongoing advisory calls are conducted via video conference with shared documentation platforms. Many of the most successful engagements have been delivered entirely virtually for teams spread across multiple time zones.